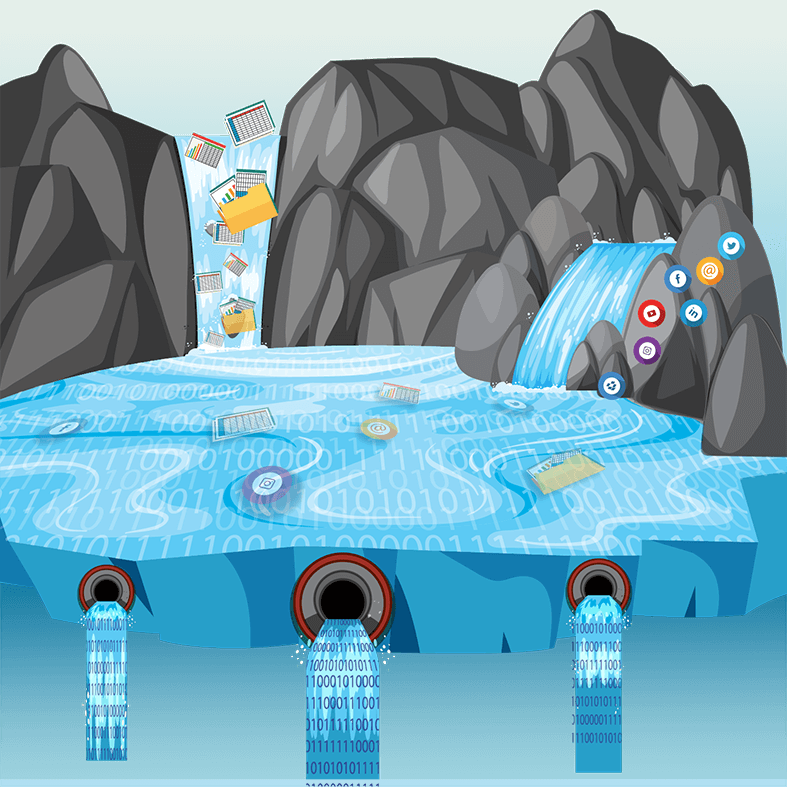


Data Analytics in its many forms has been a key part of the IT fabric for decades. The latest incarnation, 'Big Data', regularly appears in the news and is in our consciousness, more than ever, as something we should be doing if we are not already. Many businesses make significant investments in data projects, with some degree of success; however, few can consistently deliver a program of successful data projects that provide lasting value.

Businesses' love affair with data sits on an ever-swinging pendulum: it seems to go hot, then cold, then back again with every change in technology and the next best offering. Some days data is the answer to everything. Some days it's merely telling us information we already thought we knew. Other days it’s a distraction and, at worst, completely misleading and liable to make us bad decision makers.

If this changing sentiment is often how you feel about the value of data, it might not be the data that is at fault. It could be that your business does not have a consistent approach to the way it values data, and the processes that verify a data project's goals, key objectives, and outcomes.

The Changing Nature of Data

From my first day as a budding Software Developer, whether I fully understood it at the time or not, I was working with data. Of course, back then data was a by-product of an application. I just built "Software"; data was simply the necessary in and out of information that an application used.

One of my very first projects was to build a system to replace a 'green screen' Yield Recording System that captured the 'daily pick' (the daily yield in kgs of produce picked and weighed) at a Mushroom Farm.

The system I was replacing captured weight data from scales connected to one of many 'portable' terminals (computers on trolleys) and stored this data straight onto 3½ inch floppy disks. These 30 or so floppy disks would then be individually loaded onto a central computer. Invariably some of the floppy disks failed and guesses would need to be made on the missing data regarding what was picked, and by whom.

For the data that survived, the central computer commanded a Dot Matrix printer to print 30-odd reams of paper a day to report on the daily pick. The printing process alone took about 45 minutes and at the end of the print, the Business Manager would go to page 29, tear off the final page and "Voilà" - the business had a report on the totals for the day.

So, for my first project in data, it was not too hard to create value. I needed to build a system that didn’t use floppy disks, produced a report that totalled the daily pick (in substantially less than 45 minutes) and made it appear on screen. A side effect, not greatly appreciated at the time, was that we probably also saved several forests a year in the process.

Like many applications at the time, the point was:

1. Make it faster

2. Make it more reliable

Add to that "capture more" and "report more" and you have pretty much covered the perceived value propositions of all data projects throughout the first decade and a bit of this century.

The simple drivers of past projects are understandable because, at the time, computing was slow, unreliable and constrained. Fixing these problems was simply a practical, incremental step toward improving business efficiency. Today we often jump too quickly to discussions on how we can deliver "more" before we have really understood where the value in data lies. This is because cloud technologies excel in "more":

- More ability to scale

- More reliable storage

- More easily managed

- More available in the event of disaster

- More secure

- More performant

- More compatible

- More cost effective

- More intelligent

- More real-time

- More portable

All of this is great, and clearly a significant improvement on all that has come before it, but at the heart of every modern data project, before we jump to platform, should be purpose. If we get purpose wrong, we miss the greater opportunities, and our love affair with data will continue to swing at the whim of technology.

Having purpose is to have a clear expectation of what outcomes data can and will provide you, and to be more discerning on how you are going to achieve it. This starts by understanding the value proposition of data and what makes it valuable to your business.

The Value Proposition

For every data project you take on, you should be able to identify which of the value proposition categories below the project will target.

What is the value of data?

- Provides explanation or informs opinion

- Provides timely, actionable intervention

- Measures progress to indicate trend

- Predicts and explores alternatives otherwise too costly or infeasible to implement

- Saves time and makes decisions on our behalf

- Improves the experience of our services, drives more engagement, throughput or value-add

- Is sellable

- Drives innovation through experimentation

We then want a deeper objective. If we are using data to 'inform opinion', for example, what is the tangible and intangible value of knowing the information that lets you come to that opinion?

- Can we measure the additional quality, time or cost associated with the possible outcomes the knowledge could provide?

- What is the likelihood or risk associated with coming to the wrong opinion on the topic?

- What additional value is there in having that information presented sooner, or brought to your attention as it happens?

- Will having the information make all the difference in communicating the opinion to others (stakeholders, management, board, etc.)?

- Will the way in which this information is displayed make it more valuable?

We are likely to have a range of different use cases for what we could do with our data across all these value proposition categories. Some will, on closer inspection, be more worthy of the investment than others.

It is helpful to apply an Agile Methodology to your data problems. A backlog of "data problems worth solving" can be ranked from highest priority to lowest, based on our understanding of the tangible and intangible value of each problem.
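The backlog idea above can be sketched in a few lines of Python. This is only an illustration: the `DataProblem` class, the 1-5 scoring scale, the example problems and the choice to simply add tangible and intangible scores are all assumptions made for the sketch, not a prescribed method.

```python
from dataclasses import dataclass


@dataclass
class DataProblem:
    name: str
    category: str          # one of the value proposition categories
    tangible_value: int    # hypothetical score, 1 (low) to 5 (high)
    intangible_value: int  # hypothetical score, 1 (low) to 5 (high)

    @property
    def score(self) -> int:
        # Assumption: both kinds of value count equally.
        return self.tangible_value + self.intangible_value


def prioritise(backlog: list[DataProblem]) -> list[DataProblem]:
    """Return the backlog ordered from highest to lowest combined value."""
    return sorted(backlog, key=lambda p: p.score, reverse=True)


# Hypothetical backlog entries, for illustration only.
backlog = [
    DataProblem("Daily yield dashboard", "Measures progress to indicate trend", 3, 2),
    DataProblem("Stock-out alerts", "Provides timely, actionable intervention", 5, 3),
    DataProblem("Board summary report", "Provides explanation or informs opinion", 2, 4),
]

ranked = prioritise(backlog)
for rank, problem in enumerate(ranked, start=1):
    print(f"{rank}. {problem.name} ({problem.category}): {problem.score}")
```

In practice the scores would come out of conversations with stakeholders, and the weighting between tangible and intangible value is a judgment call for each business.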

Bad Data

When applying value to data, it's also helpful to consider it from another point of view: when is it not valuable? Regardless of your purpose, if your data falls into the category of 'not valuable', you will not achieve your goals.

When is data not valuable?

When it:

- Is imprisoned (poor format, legacy access, unreachable)

- Is ambiguous or inconsistent

- Has been tortured (too manipulated and removed from the context in which it was first acquired)

- Is biased (represents a small subset of the subject matter - dark data)

- Is made up

We spend a lot of time and energy mitigating issues of ‘bad data’ in business. Overcoming poorly accessible data or quality issues often requires significant effort in data cleaning and complex ETL (Extract, Transform and Load) workflows. This remediation almost always takes longer than you would estimate, and often produces poorer results, or more compromise, than we would like.

When you are evaluating "data problems worth solving" in your business, consider the state of your data. If a data problem is likely to include considerable ‘bad data’, factor this in, as it diminishes the overall value your project is likely to deliver relative to your effort.

With a list of data problems collated, consider deprioritising those deemed “too much effort versus reward” in favour of those which use data that needs less remediation effort. Alternatively, tackle the problem head-on and don’t use Band-Aid solutions. Start with a dedicated project that fixes the core problems that made the data bad in the first place. This could mean a significant data entry, recollection and cleaning exercise, or even a source system replacement. It is a fool's game hoping that bad data can be "fixed in post".
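One way to picture the "effort versus reward" trade-off is the rough Python sketch below. Everything in it is hypothetical: the `Candidate` class, the value scores, the person-day estimates and the 0.3 threshold are illustrative assumptions, and the simple value-divided-by-effort ratio is just one possible way to weigh remediation effort.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    value: float               # hypothetical combined value score
    build_effort: float        # hypothetical estimate, in person-days
    remediation_effort: float  # extra person-days to fix 'bad data'

    @property
    def value_per_effort(self) -> float:
        # Assumes total effort is greater than zero.
        return self.value / (self.build_effort + self.remediation_effort)


def split_backlog(candidates, threshold):
    """Split candidates into (pursue, deprioritise) by value per unit of effort."""
    pursue = [c for c in candidates if c.value_per_effort >= threshold]
    deprioritise = [c for c in candidates if c.value_per_effort < threshold]
    pursue.sort(key=lambda c: c.value_per_effort, reverse=True)
    return pursue, deprioritise


# Hypothetical candidates: the first needs heavy data remediation.
candidates = [
    Candidate("Customer churn report", value=8, build_effort=10, remediation_effort=30),
    Candidate("Sales trend dashboard", value=6, build_effort=10, remediation_effort=2),
]

pursue, deprioritise = split_backlog(candidates, threshold=0.3)
print("Pursue:", [c.name for c in pursue])
print("Deprioritise:", [c.name for c in deprioritise])
```

Here the higher-value problem drops down the list because most of its effort would go into remediating bad data. A deprioritised item like this is also a natural candidate for the dedicated "fix the source" project described above.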

To put data at the centre of your business, apply a process that evaluates and measures the value of your data initiatives before you undertake a data project. When you combine this process with the benefits of cloud technologies and the right architecture, you will have a more successful program of data projects and reap the most from your data.

Getting Help

Here are some suggestions that could help to improve your approach to data, and make your business "Data-Centric".

AWS provides a kickstart data strategy and implementation lab through the AWS Data Labs program.

This is available in two flavours:

- Design Lab: a 2-day lab focussed on understanding your data problems and architecting a plan that supports your data goals.

- Build Lab: a 3-5 day lab focussed on building out several of your high-value data problems in AWS to get you up and running quickly, and on giving you a framework for creating a repeatable process for approaching further problems yourself.

If you are looking for ideas of how to better apply value to your data problems, a good starting point is 'Data project checklist'. This post explains some of the breadth required to put in place a successful practice, culture, and capability for delivering repeatable valued projects in data.

Finally, if you are looking for help with your data strategy, we can navigate you through both the business and technical challenges, so you too can succeed in deriving lasting value from your data.

You can get in touch or drop me a message on LinkedIn and start the conversation!